In mathematics, the Euclidean distance between two points in Euclidean space is the length of the line segment between them. It can be calculated from the Cartesian coordinates of the points using the Pythagorean theorem, and therefore is occasionally called the Pythagorean distance.

These names come from the ancient Greek mathematicians Euclid and Pythagoras. In the Greek deductive geometry exemplified by Euclid's Elements, distances were not represented as numbers but line segments of the same length, which were considered "equal". The notion of distance is inherent in the compass tool used to draw a circle, whose points all have the same distance from a common center point. The connection from the Pythagorean theorem to distance calculation was not made until the 18th century.

The distance between two objects that are not points is usually defined to be the smallest distance among pairs of points from the two objects. Formulas are known for computing distances between different types of objects, such as the distance from a point to a line. In advanced mathematics, the concept of distance has been generalized to abstract metric spaces, and other distances than Euclidean have been studied. In some applications in statistics and optimization, the square of the Euclidean distance is used instead of the distance itself.

Distance formulas edit

One dimension edit

The distance between any two points on the real line is the absolute value of the numerical difference of their coordinates, their absolute difference. Thus if and are two points on the real line, then the distance between them is given by:[1]

A more complicated formula, giving the same value, but generalizing more readily to higher dimensions, is:[1]

In this formula, squaring and then taking the square root leaves any positive number unchanged, but replaces any negative number by its absolute value.[1]

Two dimensions edit

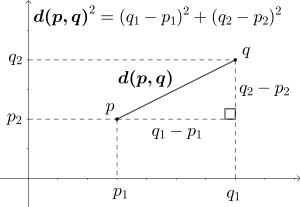

In the Euclidean plane, let point have Cartesian coordinates and let point have coordinates . Then the distance between and is given by:[2]

This can be seen by applying the Pythagorean theorem to a right triangle with horizontal and vertical sides, having the line segment from to as its hypotenuse. The two squared formulas inside the square root give the areas of squares on the horizontal and vertical sides, and the outer square root converts the area of the square on the hypotenuse into the length of the hypotenuse.[3]

It is also possible to compute the distance for points given by polar coordinates. If the polar coordinates of are and the polar coordinates of are , then their distance is[2] given by the law of cosines:

When and are expressed as complex numbers in the complex plane, the same formula for one-dimensional points expressed as real numbers can be used, although here the absolute value sign indicates the complex norm:[4]

Higher dimensions edit

In three dimensions, for points given by their Cartesian coordinates, the distance is

In general, for points given by Cartesian coordinates in -dimensional Euclidean space, the distance is[5]

The Euclidean distance may also be expressed more compactly in terms of the Euclidean norm of the Euclidean vector difference:

Objects other than points edit

For pairs of objects that are not both points, the distance can most simply be defined as the smallest distance between any two points from the two objects, although more complicated generalizations from points to sets such as Hausdorff distance are also commonly used.[6] Formulas for computing distances between different types of objects include:

- The distance from a point to a line, in the Euclidean plane[7]

- The distance from a point to a plane in three-dimensional Euclidean space[7]

- The distance between two lines in three-dimensional Euclidean space[8]

The distance from a point to a curve can be used to define its parallel curve, another curve all of whose points have the same distance to the given curve.[9]

Properties edit

The Euclidean distance is the prototypical example of the distance in a metric space,[10] and obeys all the defining properties of a metric space:[11]

- It is symmetric, meaning that for all points and , . That is (unlike road distance with one-way streets) the distance between two points does not depend on which of the two points is the start and which is the destination.[11]

- It is positive, meaning that the distance between every two distinct points is a positive number, while the distance from any point to itself is zero.[11]

- It obeys the triangle inequality: for every three points , , and , . Intuitively, traveling from to via cannot be any shorter than traveling directly from to .[11]

Another property, Ptolemy's inequality, concerns the Euclidean distances among four points , , , and . It states that

For points in the plane, this can be rephrased as stating that for every quadrilateral, the products of opposite sides of the quadrilateral sum to at least as large a number as the product of its diagonals. However, Ptolemy's inequality applies more generally to points in Euclidean spaces of any dimension, no matter how they are arranged.[12] For points in metric spaces that are not Euclidean spaces, this inequality may not be true. Euclidean distance geometry studies properties of Euclidean distance such as Ptolemy's inequality, and their application in testing whether given sets of distances come from points in a Euclidean space.[13]

According to the Beckman–Quarles theorem, any transformation of the Euclidean plane or of a higher-dimensional Euclidean space that preserves unit distances must be an isometry, preserving all distances.[14]

Squared Euclidean distance edit

In many applications, and in particular when comparing distances, it may be more convenient to omit the final square root in the calculation of Euclidean distances, as the square root does not change the order ( if and only if ). The value resulting from this omission is the square of the Euclidean distance, and is called the squared Euclidean distance.[15] For instance, the Euclidean minimum spanning tree can be determined using only the ordering between distances, and not their numeric values. Comparing squared distances produces the same result but avoids an unnecessary square-root calculation and sidesteps issues of numerical precision.[16] As an equation, the squared distance can be expressed as a sum of squares:

Beyond its application to distance comparison, squared Euclidean distance is of central importance in statistics, where it is used in the method of least squares, a standard method of fitting statistical estimates to data by minimizing the average of the squared distances between observed and estimated values,[17] and as the simplest form of divergence to compare probability distributions.[18] The addition of squared distances to each other, as is done in least squares fitting, corresponds to an operation on (unsquared) distances called Pythagorean addition.[19] In cluster analysis, squared distances can be used to strengthen the effect of longer distances.[15]

Squared Euclidean distance does not form a metric space, as it does not satisfy the triangle inequality.[20] However it is a smooth, strictly convex function of the two points, unlike the distance, which is non-smooth (near pairs of equal points) and convex but not strictly convex. The squared distance is thus preferred in optimization theory, since it allows convex analysis to be used. Since squaring is a monotonic function of non-negative values, minimizing squared distance is equivalent to minimizing the Euclidean distance, so the optimization problem is equivalent in terms of either, but easier to solve using squared distance.[21]

The collection of all squared distances between pairs of points from a finite set may be stored in a Euclidean distance matrix, and is used in this form in distance geometry.[22]

Generalizations edit

In more advanced areas of mathematics, when viewing Euclidean space as a vector space, its distance is associated with a norm called the Euclidean norm, defined as the distance of each vector from the origin. One of the important properties of this norm, relative to other norms, is that it remains unchanged under arbitrary rotations of space around the origin.[23] By Dvoretzky's theorem, every finite-dimensional normed vector space has a high-dimensional subspace on which the norm is approximately Euclidean; the Euclidean norm is the only norm with this property.[24] It can be extended to infinite-dimensional vector spaces as the L2 norm or L2 distance.[25] The Euclidean distance gives Euclidean space the structure of a topological space, the Euclidean topology, with the open balls (subsets of points at less than a given distance from a given point) as its neighborhoods.[26]

Other common distances in real coordinate spaces and function spaces:[27]

- Chebyshev distance (L∞ distance), which measures distance as the maximum of the distances in each coordinate.

- Taxicab distance (L1 distance), also called Manhattan distance, which measures distance as the sum of the distances in each coordinate.

- Minkowski distance (Lp distance), a generalization that unifies Euclidean distance, taxicab distance, and Chebyshev distance.

For points on surfaces in three dimensions, the Euclidean distance should be distinguished from the geodesic distance, the length of a shortest curve that belongs to the surface. In particular, for measuring great-circle distances on the Earth or other spherical or near-spherical surfaces, distances that have been used include the haversine distance giving great-circle distances between two points on a sphere from their longitudes and latitudes, and Vincenty's formulae also known as "Vincent distance" for distance on a spheroid.[28]

History edit

Euclidean distance is the distance in Euclidean space. Both concepts are named after ancient Greek mathematician Euclid, whose Elements became a standard textbook in geometry for many centuries.[29] Concepts of length and distance are widespread across cultures, can be dated to the earliest surviving "protoliterate" bureaucratic documents from Sumer in the fourth millennium BC (far before Euclid),[30] and have been hypothesized to develop in children earlier than the related concepts of speed and time.[31] But the notion of a distance, as a number defined from two points, does not actually appear in Euclid's Elements. Instead, Euclid approaches this concept implicitly, through the congruence of line segments, through the comparison of lengths of line segments, and through the concept of proportionality.[32]

The Pythagorean theorem is also ancient, but it could only take its central role in the measurement of distances after the invention of Cartesian coordinates by René Descartes in 1637. The distance formula itself was first published in 1731 by Alexis Clairaut.[33] Because of this formula, Euclidean distance is also sometimes called Pythagorean distance.[34] Although accurate measurements of long distances on the Earth's surface, which are not Euclidean, had again been studied in many cultures since ancient times (see history of geodesy), the idea that Euclidean distance might not be the only way of measuring distances between points in mathematical spaces came even later, with the 19th-century formulation of non-Euclidean geometry.[35] The definition of the Euclidean norm and Euclidean distance for geometries of more than three dimensions also first appeared in the 19th century, in the work of Augustin-Louis Cauchy.[36]

References edit

- ^ a b c Smith, Karl (2013), Precalculus: A Functional Approach to Graphing and Problem Solving, Jones & Bartlett Publishers, p. 8, ISBN 978-0-7637-5177-7

- ^ a b Cohen, David (2004), Precalculus: A Problems-Oriented Approach (6th ed.), Cengage Learning, p. 698, ISBN 978-0-534-40212-9

- ^ Aufmann, Richard N.; Barker, Vernon C.; Nation, Richard D. (2007), College Trigonometry (6th ed.), Cengage Learning, p. 17, ISBN 978-1-111-80864-8

- ^ Andreescu, Titu; Andrica, Dorin (2014), "3.1.1 The Distance Between Two Points", Complex Numbers from A to ... Z (2nd ed.), Birkhäuser, pp. 57–58, ISBN 978-0-8176-8415-0

- ^ Tabak, John (2014), Geometry: The Language of Space and Form, Facts on File math library, Infobase Publishing, p. 150, ISBN 978-0-8160-6876-0

- ^ Ó Searcóid, Mícheál (2006), "2.7 Distances from Sets to Sets", Metric Spaces, Springer Undergraduate Mathematics Series, Springer, pp. 29–30, ISBN 978-1-84628-627-8

- ^ a b Ballantine, J. P.; Jerbert, A. R. (April 1952), "Distance from a line, or plane, to a point", Classroom notes, American Mathematical Monthly, 59 (4): 242–243, doi:10.2307/2306514, JSTOR 2306514

- ^ Bell, Robert J. T. (1914), "49. The shortest distance between two lines", An Elementary Treatise on Coordinate Geometry of Three Dimensions (2nd ed.), Macmillan, pp. 57–61

- ^ Maekawa, Takashi (March 1999), "An overview of offset curves and surfaces", Computer-Aided Design, 31 (3): 165–173, doi:10.1016/s0010-4485(99)00013-5

- ^ Ivanov, Oleg A. (2013), Easy as π?: An Introduction to Higher Mathematics, Springer, p. 140, ISBN 978-1-4612-0553-1

- ^ a b c d Strichartz, Robert S. (2000), The Way of Analysis, Jones & Bartlett Learning, p. 357, ISBN 978-0-7637-1497-0

- ^ Adam, John A. (2017), "Chapter 2. Introduction to the "Physics" of Rays", Rays, Waves, and Scattering: Topics in Classical Mathematical Physics, Princeton Series in Applied Mathematics, Princeton University Press, pp. 26–27, doi:10.1515/9781400885404-004, ISBN 978-1-4008-8540-4

- ^ Liberti, Leo; Lavor, Carlile (2017), Euclidean Distance Geometry: An Introduction, Springer Undergraduate Texts in Mathematics and Technology, Springer, p. xi, ISBN 978-3-319-60792-4

- ^ Beckman, F. S.; Quarles, D. A. Jr. (1953), "On isometries of Euclidean spaces", Proceedings of the American Mathematical Society, 4 (5): 810–815, doi:10.2307/2032415, JSTOR 2032415, MR 0058193

- ^ a b Spencer, Neil H. (2013), "5.4.5 Squared Euclidean Distances", Essentials of Multivariate Data Analysis, CRC Press, p. 95, ISBN 978-1-4665-8479-2

- ^ Yao, Andrew Chi Chih (1982), "On constructing minimum spanning trees in k-dimensional spaces and related problems", SIAM Journal on Computing, 11 (4): 721–736, doi:10.1137/0211059, MR 0677663

- ^ Randolph, Karen A.; Myers, Laura L. (2013), Basic Statistics in Multivariate Analysis, Pocket Guide to Social Work Research Methods, Oxford University Press, p. 116, ISBN 978-0-19-976404-4

- ^ Csiszár, I. (1975), "I-divergence geometry of probability distributions and minimization problems", Annals of Probability, 3 (1): 146–158, doi:10.1214/aop/1176996454, JSTOR 2959270, MR 0365798

- ^ Moler, Cleve and Donald Morrison (1983), "Replacing Square Roots by Pythagorean Sums" (PDF), IBM Journal of Research and Development, 27 (6): 577–581, CiteSeerX 10.1.1.90.5651, doi:10.1147/rd.276.0577

- ^ Mielke, Paul W.; Berry, Kenneth J. (2000), "Euclidean distance based permutation methods in atmospheric science", in Brown, Timothy J.; Mielke, Paul W. Jr. (eds.), Statistical Mining and Data Visualization in Atmospheric Sciences, Springer, pp. 7–27, doi:10.1007/978-1-4757-6581-6_2

- ^ Kaplan, Wilfred (2011), Maxima and Minima with Applications: Practical Optimization and Duality, Wiley Series in Discrete Mathematics and Optimization, vol. 51, John Wiley & Sons, p. 61, ISBN 978-1-118-03104-9

- ^ Alfakih, Abdo Y. (2018), Euclidean Distance Matrices and Their Applications in Rigidity Theory, Springer, p. 51, ISBN 978-3-319-97846-8

- ^ Kopeikin, Sergei; Efroimsky, Michael; Kaplan, George (2011), Relativistic Celestial Mechanics of the Solar System, John Wiley & Sons, p. 106, ISBN 978-3-527-63457-6

- ^ Matoušek, Jiří (2002), Lectures on Discrete Geometry, Graduate Texts in Mathematics, Springer, p. 349, ISBN 978-0-387-95373-1

- ^ Ciarlet, Philippe G. (2013), Linear and Nonlinear Functional Analysis with Applications, Society for Industrial and Applied Mathematics, p. 173, ISBN 978-1-61197-258-0

- ^ Richmond, Tom (2020), General Topology: An Introduction, De Gruyter, p. 32, ISBN 978-3-11-068657-9

- ^ Klamroth, Kathrin (2002), "Section 1.1: Norms and Metrics", Single-Facility Location Problems with Barriers, Springer Series in Operations Research, Springer, pp. 4–6, doi:10.1007/0-387-22707-5_1

- ^ Panigrahi, Narayan (2014), "12.2.4 Haversine Formula and 12.2.5 Vincenty's Formula", Computing in Geographic Information Systems, CRC Press, pp. 212–214, ISBN 978-1-4822-2314-9

- ^ Zhang, Jin (2007), Visualization for Information Retrieval, Springer, ISBN 978-3-540-75148-9

- ^ Høyrup, Jens (2018), "Mesopotamian mathematics" (PDF), in Jones, Alexander; Taub, Liba (eds.), The Cambridge History of Science, Volume 1: Ancient Science, Cambridge University Press, pp. 58–72, archived from the original (PDF) on May 17, 2021, retrieved October 21, 2020

- ^ Acredolo, Curt; Schmid, Jeannine (1981), "The understanding of relative speeds, distances, and durations of movement", Developmental Psychology, 17 (4): 490–493, doi:10.1037/0012-1649.17.4.490

- ^ Henderson, David W. (2002), "Review of Geometry: Euclid and Beyond by Robin Hartshorne", Bulletin of the American Mathematical Society, 39: 563–571, doi:10.1090/S0273-0979-02-00949-7

- ^ Maor, Eli (2019), The Pythagorean Theorem: A 4,000-Year History, Princeton University Press, pp. 133–134, ISBN 978-0-691-19688-6

- ^ Rankin, William C.; Markley, Robert P.; Evans, Selby H. (March 1970), "Pythagorean distance and the judged similarity of schematic stimuli", Perception & Psychophysics, 7 (2): 103–107, doi:10.3758/bf03210143, S2CID 144797925

- ^ Milnor, John (1982), "Hyperbolic geometry: the first 150 years", Bulletin of the American Mathematical Society, 6 (1): 9–24, doi:10.1090/S0273-0979-1982-14958-8, MR 0634431

- ^ Ratcliffe, John G. (2019), Foundations of Hyperbolic Manifolds, Graduate Texts in Mathematics, vol. 149 (3rd ed.), Springer, p. 32, ISBN 978-3-030-31597-9