This article needs additional citations for verification. (February 2014) |

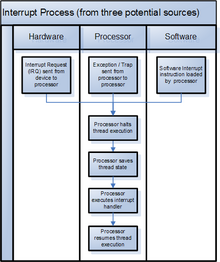

In digital computers, an interrupt (sometimes referred to as a trap)[1] is a request for the processor to interrupt currently executing code (when permitted), so that the event can be processed in a timely manner. If the request is accepted, the processor will suspend its current activities, save its state, and execute a function called an interrupt handler (or an interrupt service routine, ISR) to deal with the event. This interruption is often temporary, allowing the software to resume[a] normal activities after the interrupt handler finishes, although the interrupt could instead indicate a fatal error.[2]

Interrupts are commonly used by hardware devices to indicate electronic or physical state changes that require time-sensitive attention. Interrupts are also commonly used to implement computer multitasking and system calls, especially in real-time computing. Systems that use interrupts in these ways are said to be interrupt-driven.[3]

History edit

Hardware interrupts were introduced as an optimization, eliminating unproductive waiting time in polling loops, waiting for external events. The first system to use this approach was the DYSEAC, completed in 1954, although earlier systems provided error trap functions.[4]

The UNIVAC 1103A computer is generally credited with the earliest use of interrupts in 1953.[5][6] Earlier, on the UNIVAC I (1951) "Arithmetic overflow either triggered the execution of a two-instruction fix-up routine at address 0, or, at the programmer's option, caused the computer to stop." The IBM 650 (1954) incorporated the first occurrence of interrupt masking. The National Bureau of Standards DYSEAC (1954) was the first to use interrupts for I/O. The IBM 704 was the first to use interrupts for debugging, with a "transfer trap", which could invoke a special routine when a branch instruction was encountered. The MIT Lincoln Laboratory TX-2 system (1957) was the first to provide multiple levels of priority interrupts.[6]

Types edit

Interrupt signals may be issued in response to hardware or software events. These are classified as hardware interrupts or software interrupts, respectively. For any particular processor, the number of interrupt types is limited by the architecture.

Hardware interrupts edit

A hardware interrupt is a condition related to the state of the hardware that may be signaled by an external hardware device, e.g., an interrupt request (IRQ) line on a PC, or detected by devices embedded in processor logic (e.g., the CPU timer in IBM System/370), to communicate that the device needs attention from the operating system (OS)[7] or, if there is no OS, from the bare metal program running on the CPU. Such external devices may be part of the computer (e.g., disk controller) or they may be external peripherals. For example, pressing a keyboard key or moving a mouse plugged into a PS/2 port triggers hardware interrupts that cause the processor to read the keystroke or mouse position.

Hardware interrupts can arrive asynchronously with respect to the processor clock, and at any time during instruction execution. Consequently, all incoming hardware interrupt signals are conditioned by synchronizing them to the processor clock, and acted upon only at instruction execution boundaries.

In many systems, each device is associated with a particular IRQ signal. This makes it possible to quickly determine which hardware device is requesting service, and to expedite servicing of that device.

On some older systems, such as the 1964 CDC 3600,[8] all interrupts went to the same location, and the OS used a specialized instruction to determine the highest-priority outstanding unmasked interrupt. On contemporary systems, there is generally a distinct interrupt routine for each type of interrupt (or for each interrupt source), often implemented as one or more interrupt vector tables.

Masking edit

To mask an interrupt is to disable it, so it is deferred[b] or ignored[c] by the processor, while to unmask an interrupt is to enable it.[9]

Processors typically have an internal interrupt mask register,[d] which allows selective enabling[2] (and disabling) of hardware interrupts. Each interrupt signal is associated with a bit in the mask register. On some systems, the interrupt is enabled when the bit is set, and disabled when the bit is clear. On others, the reverse is true, and a set bit disables the interrupt. When the interrupt is disabled, the associated interrupt signal may be ignored by the processor, or it may remain pending. Signals which are affected by the mask are called maskable interrupts.

Some interrupt signals are not affected by the interrupt mask and therefore cannot be disabled; these are called non-maskable interrupts (NMIs). These indicate high-priority events which cannot be ignored under any circumstances, such as the timeout signal from a watchdog timer. With regard to SPARC, the Non-Maskable Interrupt (NMI), despite having the highest priority among interrupts, can be prevented from occurring through the use of an interrupt mask.[10]

Missing interrupts edit

One failure mode is when the hardware does not generate the expected interrupt for a change in state, causing the operating system to wait indefinitely. Depending on the details, the failure might affect only a single process or might have global impact. Some operating systems have code specifically to deal with this.

As an example, IBM Operating System/360 (OS/360) relies on a not-ready to ready device-end interrupt when a tape has been mounted on a tape drive, and will not read the tape label until that interrupt occurs or is simulated. IBM added code in OS/360 so that the VARY ONLINE command will simulate a device end interrupt on the target device.

Spurious interrupts edit

A spurious interrupt is a hardware interrupt for which no source can be found. The term "phantom interrupt" or "ghost interrupt" may also be used to describe this phenomenon. Spurious interrupts tend to be a problem with a wired-OR interrupt circuit attached to a level-sensitive processor input. Such interrupts may be difficult to identify when a system misbehaves.

In a wired-OR circuit, parasitic capacitance charging/discharging through the interrupt line's bias resistor will cause a small delay before the processor recognizes that the interrupt source has been cleared. If the interrupting device is cleared too late in the interrupt service routine (ISR), there will not be enough time for the interrupt circuit to return to the quiescent state before the current instance of the ISR terminates. The result is the processor will think another interrupt is pending, since the voltage at its interrupt request input will be not high or low enough to establish an unambiguous internal logic 1 or logic 0. The apparent interrupt will have no identifiable source, hence the "spurious" moniker.

A spurious interrupt may also be the result of electrical anomalies due to faulty circuit design, high noise levels, crosstalk, timing issues, or more rarely, device errata.[11]

A spurious interrupt may result in system deadlock or other undefined operation if the ISR does not account for the possibility of such an interrupt occurring. As spurious interrupts are mostly a problem with wired-OR interrupt circuits, good programming practice in such systems is for the ISR to check all interrupt sources for activity and take no action (other than possibly logging the event) if none of the sources is interrupting. They may even lead to crashing of the computer in adverse scenarios.

Software interrupts edit

A software interrupt is requested by the processor itself upon executing particular instructions or when certain conditions are met. Every software interrupt signal is associated with a particular interrupt handler.

A software interrupt may be intentionally caused by executing a special instruction which, by design, invokes an interrupt when executed.[e] Such instructions function similarly to subroutine calls and are used for a variety of purposes, such as requesting operating system services and interacting with device drivers (e.g., to read or write storage media). Software interrupts may also be triggered by program execution errors or by the virtual memory system.

Typically, the operating system kernel will catch and handle such interrupts. Some interrupts are handled transparently to the program - for example, the normal resolution of a page fault is to make the required page accessible in physical memory. But in other cases such as a segmentation fault the operating system executes a process callback. On Unix-like operating systems this involves sending a signal such as SIGSEGV, SIGBUS, SIGILL or SIGFPE, which may either call a signal handler or execute a default action (terminating the program). On Windows the callback is made using Structured Exception Handling with an exception code such as STATUS_ACCESS_VIOLATION or STATUS_INTEGER_DIVIDE_BY_ZERO.[12]

In a kernel process, it is often the case that some types of software interrupts are not supposed to happen. If they occur nonetheless, an operating system crash may result.

Terminology edit

The terms interrupt, trap, exception, fault, and abort are used to distinguish types of interrupts, although "there is no clear consensus as to the exact meaning of these terms".[13] The term trap may refer to any interrupt, to any software interrupt, to any synchronous software interrupt, or only to interrupts caused by instructions with trap in their names. In some usages, the term trap refers specifically to a breakpoint intended to initiate a context switch to a monitor program or debugger.[1] It may also refer to a synchronous interrupt caused by an exceptional condition (e.g., division by zero, invalid memory access, illegal opcode),[13] although the term exception is more common for this.

x86 divides interrupts into (hardware) interrupts and software exceptions, and identifies three types of exceptions: faults, traps, and aborts.[14][15] (Hardware) interrupts are interrupts triggered asynchronously by an I/O device, and allow the program to be restarted with no loss of continuity.[14] A fault is restartable as well but is tied to the synchronous execution of an instruction - the return address points to the faulting instruction. A trap is similar to a fault except that the return address points to the instruction to be executed after the trapping instruction;[16] one prominent use is to implement system calls.[15] An abort is used for severe errors, such as hardware errors and illegal values in system tables, and often[f] does not allow a restart of the program.[16]

Arm uses the term exception to refer to all types of interrupts,[17] and divides exceptions into (hardware) interrupts, aborts, reset, and exception-generating instructions. Aborts correspond to x86 exceptions and may be prefetch aborts (failed instruction fetches) or data aborts (failed data accesses), and may be synchronous or asynchronous. Asynchronous aborts may be precise or imprecise. MMU aborts (page faults) are synchronous.[18]

RISC-V uses interrupt as the overall term as well as for the external subset; internal interrupts are called exceptions.

Triggering methods edit

Each interrupt signal input is designed to be triggered by either a logic signal level or a particular signal edge (level transition). Level-sensitive inputs continuously request processor service so long as a particular (high or low) logic level is applied to the input. Edge-sensitive inputs react to signal edges: a particular (rising or falling) edge will cause a service request to be latched; the processor resets the latch when the interrupt handler executes.

Level-triggered edit

A level-triggered interrupt is requested by holding the interrupt signal at its particular (high or low) active logic level. A device invokes a level-triggered interrupt by driving the signal to and holding it at the active level. It negates the signal when the processor commands it to do so, typically after the device has been serviced.

The processor samples the interrupt input signal during each instruction cycle. The processor will recognize the interrupt request if the signal is asserted when sampling occurs.

Level-triggered inputs allow multiple devices to share a common interrupt signal via wired-OR connections. The processor polls to determine which devices are requesting service. After servicing a device, the processor may again poll and, if necessary, service other devices before exiting the ISR.

Edge-triggered edit

An edge-triggered interrupt is an interrupt signaled by a level transition on the interrupt line, either a falling edge (high to low) or a rising edge (low to high). A device wishing to signal an interrupt drives a pulse onto the line and then releases the line to its inactive state. If the pulse is too short to be detected by polled I/O then special hardware may be required to detect it. The important part of edge triggering is that the signal must transition to trigger the interrupt; for example, if the signal was high-low-low, there would only be one falling edge interrupt triggered, and the continued low level would not trigger a further interrupt. The signal must return to the high level and fall again in order to trigger a further interrupt. This contrasts with a level trigger where the low level would continue to create interrupts (if they are enabled) until the signal returns to its high level.

Computers with edge-triggered interrupts may include an interrupt register that retains the status of pending interrupts. Systems with interrupt registers generally have interrupt mask registers as well.

Processor response edit

The processor samples the interrupt trigger signals or interrupt register during each instruction cycle, and will process the highest priority enabled interrupt found. Regardless of the triggering method, the processor will begin interrupt processing at the next instruction boundary following a detected trigger, thus ensuring:

- The processor status[g] is saved in a known manner. Typically the status is stored in a known location, but on some systems it is stored on a stack.

- All instructions before the one pointed to by the PC have fully executed.

- No instruction beyond the one pointed to by the PC has been executed, or any such instructions are undone before handling the interrupt.

- The execution state of the instruction pointed to by the PC is known.

There are several different architectures for handling interrupts. In some, there is a single interrupt handler[19] that must scan for the highest priority enabled interrupt. In others, there are separate interrupt handlers for separate interrupt types,[20] separate I/O channels or devices, or both.[21][22] Several interrupt causes may have the same interrupt type and thus the same interrupt handler, requiring the interrupt handler to determine the cause.[20]

System implementation edit

Interrupts may be fully handled in hardware by the CPU, or may be handled by both the CPU and another component such as a programmable interrupt controller or a southbridge.

If an additional component is used, that component would be connected between the interrupting device and the processor's interrupt pin to multiplex several sources of interrupt onto the one or two CPU lines typically available. If implemented as part of the memory controller, interrupts are mapped into the system's memory address space.[citation needed]

In systems on a chip (SoC) implementations, interrupts come from different blocks of the chip and are usually aggregated in an interrupt controller attached to one or several processors (in a multi-core system).[23]

edit

Multiple devices may share an edge-triggered interrupt line if they are designed to. The interrupt line must have a pull-down or pull-up resistor so that when not actively driven it settles to its inactive state, which is the default state of it. Devices signal an interrupt by briefly driving the line to its non-default state, and let the line float (do not actively drive it) when not signaling an interrupt. This type of connection is also referred to as open collector. The line then carries all the pulses generated by all the devices. (This is analogous to the pull cord on some buses and trolleys that any passenger can pull to signal the driver that they are requesting a stop.) However, interrupt pulses from different devices may merge if they occur close in time. To avoid losing interrupts the CPU must trigger on the trailing edge of the pulse (e.g. the rising edge if the line is pulled up and driven low). After detecting an interrupt the CPU must check all the devices for service requirements.

Edge-triggered interrupts do not suffer the problems that level-triggered interrupts have with sharing. Service of a low-priority device can be postponed arbitrarily, while interrupts from high-priority devices continue to be received and get serviced. If there is a device that the CPU does not know how to service, which may raise spurious interrupts, it will not interfere with interrupt signaling of other devices. However, it is easy for an edge-triggered interrupt to be missed - for example, when interrupts are masked for a period - and unless there is some type of hardware latch that records the event it is impossible to recover. This problem caused many "lockups" in early computer hardware because the processor did not know it was expected to do something. More modern hardware often has one or more interrupt status registers that latch interrupts requests; well-written edge-driven interrupt handling code can check these registers to ensure no events are missed.

The elderly Industry Standard Architecture (ISA) bus uses edge-triggered interrupts, without mandating that devices be able to share IRQ lines, but all mainstream ISA motherboards include pull-up resistors on their IRQ lines, so well-behaved ISA devices sharing IRQ lines should just work fine. The parallel port also uses edge-triggered interrupts. Many older devices assume that they have exclusive use of IRQ lines, making it electrically unsafe to share them.

There are 3 ways multiple devices "sharing the same line" can be raised. First is by exclusive conduction (switching) or exclusive connection (to pins). Next is by bus (all connected to the same line listening): cards on a bus must know when they are to talk and not talk (i.e., the ISA bus). Talking can be triggered in two ways: by accumulation latch or by logic gates. Logic gates expect a continual data flow that is monitored for key signals. Accumulators only trigger when the remote side excites the gate beyond a threshold, thus no negotiated speed is required. Each has its speed versus distance advantages. A trigger, generally, is the method in which excitation is detected: rising edge, falling edge, threshold (oscilloscope can trigger a wide variety of shapes and conditions).

Triggering for software interrupts must be built into the software (both in OS and app). A 'C' app has a trigger table (a table of functions) in its header, which both the app and OS know of and use appropriately that is not related to hardware. However do not confuse this with hardware interrupts which signal the CPU (the CPU enacts software from a table of functions, similarly to software interrupts).

Difficulty with sharing interrupt lines edit

Multiple devices sharing an interrupt line (of any triggering style) all act as spurious interrupt sources with respect to each other. With many devices on one line, the workload in servicing interrupts grows in proportion to the square of the number of devices. It is therefore preferred to spread devices evenly across the available interrupt lines. Shortage of interrupt lines is a problem in older system designs where the interrupt lines are distinct physical conductors. Message-signaled interrupts, where the interrupt line is virtual, are favored in new system architectures (such as PCI Express) and relieve this problem to a considerable extent.

Some devices with a poorly designed programming interface provide no way to determine whether they have requested service. They may lock up or otherwise misbehave if serviced when they do not want it. Such devices cannot tolerate spurious interrupts, and so also cannot tolerate sharing an interrupt line. ISA cards, due to often cheap design and construction, are notorious for this problem. Such devices are becoming much rarer, as hardware logic becomes cheaper and new system architectures mandate shareable interrupts.

Hybrid edit

Some systems use a hybrid of level-triggered and edge-triggered signaling. The hardware not only looks for an edge, but it also verifies that the interrupt signal stays active for a certain period of time.

A common use of a hybrid interrupt is for the NMI (non-maskable interrupt) input. Because NMIs generally signal major – or even catastrophic – system events, a good implementation of this signal tries to ensure that the interrupt is valid by verifying that it remains active for a period of time. This 2-step approach helps to eliminate false interrupts from affecting the system.

Message-signaled edit

A message-signaled interrupt does not use a physical interrupt line. Instead, a device signals its request for service by sending a short message over some communications medium, typically a computer bus. The message might be of a type reserved for interrupts, or it might be of some pre-existing type such as a memory write.

Message-signalled interrupts behave very much like edge-triggered interrupts, in that the interrupt is a momentary signal rather than a continuous condition. Interrupt-handling software treats the two in much the same manner. Typically, multiple pending message-signaled interrupts with the same message (the same virtual interrupt line) are allowed to merge, just as closely spaced edge-triggered interrupts can merge.

Message-signalled interrupt vectors can be shared, to the extent that the underlying communication medium can be shared. No additional effort is required.

Because the identity of the interrupt is indicated by a pattern of data bits, not requiring a separate physical conductor, many more distinct interrupts can be efficiently handled. This reduces the need for sharing. Interrupt messages can also be passed over a serial bus, not requiring any additional lines.

PCI Express, a serial computer bus, uses message-signaled interrupts exclusively.

Doorbell edit

In a push button analogy applied to computer systems, the term doorbell or doorbell interrupt is often used to describe a mechanism whereby a software system can signal or notify a computer hardware device that there is some work to be done. Typically, the software system will place data in some well-known and mutually agreed upon memory locations, and "ring the doorbell" by writing to a different memory location. This different memory location is often called the doorbell region, and there may even be multiple doorbells serving different purposes in this region. It is this act of writing to the doorbell region of memory that "rings the bell" and notifies the hardware device that the data are ready and waiting. The hardware device would now know that the data are valid and can be acted upon. It would typically write the data to a hard disk drive, or send them over a network, or encrypt them, etc.

The term doorbell interrupt is usually a misnomer. It is similar to an interrupt, because it causes some work to be done by the device; however, the doorbell region is sometimes implemented as a polled region, sometimes the doorbell region writes through to physical device registers, and sometimes the doorbell region is hardwired directly to physical device registers. When either writing through or directly to physical device registers, this may cause a real interrupt to occur at the device's central processor unit (CPU), if it has one.

Doorbell interrupts can be compared to Message Signaled Interrupts, as they have some similarities.

Multiprocessor IPI edit

In multiprocessor systems, a processor may send an interrupt request to another processor via inter-processor interrupts[h] (IPI).

Performance edit

Interrupts provide low overhead and good latency at low load, but degrade significantly at high interrupt rate unless care is taken to prevent several pathologies. The phenomenon where the overall system performance is severely hindered by excessive amounts of processing time spent handling interrupts is called an interrupt storm.

There are various forms of livelocks, when the system spends all of its time processing interrupts to the exclusion of other required tasks. Under extreme conditions, a large number of interrupts (like very high network traffic) may completely stall the system. To avoid such problems, an operating system must schedule network interrupt handling as carefully as it schedules process execution.[24]

With multi-core processors, additional performance improvements in interrupt handling can be achieved through receive-side scaling (RSS) when multiqueue NICs are used. Such NICs provide multiple receive queues associated to separate interrupts; by routing each of those interrupts to different cores, processing of the interrupt requests triggered by the network traffic received by a single NIC can be distributed among multiple cores. Distribution of the interrupts among cores can be performed automatically by the operating system, or the routing of interrupts (usually referred to as IRQ affinity) can be manually configured.[25][26]

A purely software-based implementation of the receiving traffic distribution, known as receive packet steering (RPS), distributes received traffic among cores later in the data path, as part of the interrupt handler functionality. Advantages of RPS over RSS include no requirements for specific hardware, more advanced traffic distribution filters, and reduced rate of interrupts produced by a NIC. As a downside, RPS increases the rate of inter-processor interrupts (IPIs). Receive flow steering (RFS) takes the software-based approach further by accounting for application locality; further performance improvements are achieved by processing interrupt requests by the same cores on which particular network packets will be consumed by the targeted application.[25][27][28]

Typical uses edit

Interrupts are commonly used to service hardware timers, transfer data to and from storage (e.g., disk I/O) and communication interfaces (e.g., UART, Ethernet), handle keyboard and mouse events, and to respond to any other time-sensitive events as required by the application system. Non-maskable interrupts are typically used to respond to high-priority requests such as watchdog timer timeouts, power-down signals and traps.

Hardware timers are often used to generate periodic interrupts. In some applications, such interrupts are counted by the interrupt handler to keep track of absolute or elapsed time, or used by the OS task scheduler to manage execution of running processes, or both. Periodic interrupts are also commonly used to invoke sampling from input devices such as analog-to-digital converters, incremental encoder interfaces, and GPIO inputs, and to program output devices such as digital-to-analog converters, motor controllers, and GPIO outputs.

A disk interrupt signals the completion of a data transfer from or to the disk peripheral; this may cause a process to run which is waiting to read or write. A power-off interrupt predicts imminent loss of power, allowing the computer to perform an orderly shut-down while there still remains enough power to do so. Keyboard interrupts typically cause keystrokes to be buffered so as to implement typeahead.

Interrupts are sometimes used to emulate instructions which are unimplemented on some computers in a product family.[29] For example floating point instructions may be implemented in hardware on some systems and emulated on lower-cost systems. In the latter case, execution of an unimplemented floating point instruction will cause an "illegal instruction" exception interrupt. The interrupt handler will implement the floating point function in software and then return to the interrupted program as if the hardware-implemented instruction had been executed.[30] This provides application software portability across the entire line.

Interrupts are similar to signals, the difference being that signals are used for inter-process communication (IPC), mediated by the kernel (possibly via system calls) and handled by processes, while interrupts are mediated by the processor and handled by the kernel. The kernel may pass an interrupt as a signal to the process that caused it (typical examples are SIGSEGV, SIGBUS, SIGILL and SIGFPE).

See also edit

- Advanced Programmable Interrupt Controller (APIC)

- BIOS interrupt call

- Event-driven programming

- Exception handling

- INT (x86 instruction)

- Interrupt coalescing

- Interrupt handler

- Interrupt latency

- Interrupts in 65xx processors

- Ralf Brown's Interrupt List

- Interrupts on IBM System/360 architecture

- Time-triggered system

- Autonomous peripheral operation

Notes edit

- ^ The operating system might resume the interrupted process or might switch to a different process.

- ^ Typically, interrupt events associated with I/O remain pending until the interrupt is enabled or explicitly cleared, e.g., by the Test Pending Interruption (TPI) instruction of IBM System/370-XA and later.

- ^ E.g., when program mask bits on the IBM System/360 are 0 (disabled), the corresponding overflow and significance events do not result in a pending interrupt.

- ^ The mask register may be a single register or multiple registers, e.g., bits in the PSW and other bits in control registers.

- ^ See INT (x86 instruction)

- ^ Some operating systems can recover from severe errors, e.g., paging in a page from a paging file after an uncorrectable ECC error in an unaltered page.

- ^ This might be just the Program Counter (PC), a PSW or multiple registers.

- ^ Known as shoulder taps on some IBM operating systems.

References edit

- ^ a b "The Jargon File, version 4.4.7". 2003-10-27. Retrieved 20 January 2022.

- ^ a b Jonathan Corbet; Alessandro Rubini; Greg Kroah-Hartman (2005). "Linux Device Drivers, Third Edition, Chapter 10. Interrupt Handling" (PDF). O'Reilly Media. p. 269. Retrieved December 25, 2014.

Then it's just a matter of cleaning up, running software interrupts, and getting back to regular work. The "regular work" may well have changed as a result of an interrupt (the handler could

wake_upa process, for example), so the last thing that happens on return from an interrupt is a possible rescheduling of the processor. - ^ Rosenthal, Scott (May 1995). "Basics of Interrupts". Archived from the original on 2016-04-26. Retrieved 2010-11-11.

- ^ Codd, Edgar F. "Multiprogramming". Advances in Computers. 3: 82.

- ^ Bell, C. Gordon; Newell, Allen (1971). Computer structures: readings and examples. McGraw-Hill. p. 46. ISBN 9780070043572. Retrieved Feb 18, 2019.

- ^ a b Smotherman, Mark. "Interrupts". Retrieved 22 December 2021.

- ^ "Hardware interrupts". Retrieved 2014-02-09.

- ^ "Interrupt Instructions". Control Data 3600 Computer System Reference Manual (PDF). Control Data Corporation. July 1964. pp. 4–6. 60021300.

- ^ Bai, Ying (2017). Microcontroller Engineering with MSP432: Fundamentals and Applications. CRC Press. p. 21. ISBN 978-1-4987-7298-3. LCCN 2016020120.

In Cortex-M4 system, the interrupts and exceptions have the following properties: ... Generally, a single bit in a mask register is used to mask (disable) or unmask (enable) certain interrupt/exceptions to occur

- ^ "Interrupt Levels". Retrieved 2023-11-17.

- ^ Li, Qing; Yao, Caroline (2003). Real-Time Concepts for Embedded Systems. CRC Press. p. 163. ISBN 1482280825.

- ^ "Hardware exceptions". docs.microsoft.com. 3 August 2021.

- ^ a b Hyde, Randall (1996). "Chapter Seventeen: Interrupts, Traps and Exceptions (Part 1)". The Art Of Assembly Language Programming. Retrieved 22 December 2021.

The concept of an interrupt is something that has expanded in scope over the years. The 80x86 family has only added to the confusion surrounding interrupts by introducing the int (software interrupt) instruction. Indeed different manufacturers have used terms like exceptions faults aborts traps and interrupts to describe the phenomena this chapter discusses. Unfortunately there is no clear consensus as to the exact meaning of these terms. Different authors adopt different terms to their own use.

- ^ a b "Intel® 64 and IA-32 Architectures Software Developer's Manual Volume 1: Basic Architecture". pp. 6–12 Vol. 1. Retrieved 22 December 2021.

- ^ a b Bryant, Randal E.; O’Hallaron, David R. (2016). "8.1.2 Classes of exceptions". Computer systems: a programmer's perspective (Third, Global ed.). Harlow: Pearson. ISBN 978-1-292-10176-7.

- ^ a b "Intel® 64 and IA-32 architectures software developer's manual volume 3A: System programming guide, part 1". p. 6-5 Vol. 3A. Retrieved 22 December 2021.

- ^ "Exception Handling". developer.arm.com. ARM Cortex-A Series Programmer's Guide for ARMv7-A. Retrieved 21 January 2022.

- ^ "Types of exception". developer.arm.com. ARM Cortex-A Series Programmer's Guide for ARMv7-A. Retrieved 22 December 2021.

- ^ Control Data 6400/6500/6600 Computer Systems Reference Manual (PDF). Revision K. Control Data Corporation. October 11, 1966. 60021300K. Retrieved May 17, 2023.

- ^ a b IBM System/360 Principles of Operation (PDF) (Eighth ed.). IBM. September 1968. p. 77. A22-6821-7.

- ^ PDP-11 Processor Handbook PDP11/04//34a/44/60/70 (PDF). Digital Equipment Corporation. 1979. pp. 128–131.

- ^ PDP-11 Peripherals and Interfacing Handbook (PDF). Digital Equipment Corporation. p. 4.

- ^ Yiu, Joseph (2010-01-01), Yiu, Joseph (ed.), "CHAPTER 2 - Overview of the Cortex-M3", The Definitive Guide to the ARM Cortex-M3 (Second Edition), Oxford: Newnes, pp. 11–24, doi:10.1016/b978-1-85617-963-8.00005-3, ISBN 978-1-85617-963-8, retrieved 2023-10-11

- ^ Mogul, Jeffrey C.; Ramakrishnan, K. K. (1997). "Eliminating receive livelock in an interrupt-driven kernel". ACM Transactions on Computer Systems. 15 (3): 217–252. doi:10.1145/263326.263335. S2CID 215749380. Retrieved 2010-11-11.

- ^ a b Tom Herbert; Willem de Bruijn (May 9, 2014). "Documentation/networking/scaling.txt". Linux kernel documentation. kernel.org. Retrieved November 16, 2014.

- ^ "Intel 82574 Gigabit Ethernet Controller Family Datasheet" (PDF). Intel. June 2014. p. 1. Retrieved November 16, 2014.

- ^ Jonathan Corbet (November 17, 2009). "Receive packet steering". LWN.net. Retrieved November 16, 2014.

- ^ Jake Edge (April 7, 2010). "Receive flow steering". LWN.net. Retrieved November 16, 2014.

- ^ Thusoo, Shalesh; et al. "Patent US 5632028 A". Google Patents. Retrieved Aug 13, 2017.

- ^ Altera Corporation (2009). Nios II Processor Reference (PDF). p. 4. Retrieved Aug 13, 2017.

External links edit

- Interrupts Made Easy

- Interrupts for Microchip PIC Microcontroller

- IBM PC Interrupt Table

- University of Alberta CMPUT 296 Concrete Computing Notes on Interrupts, archived from the original on March 13, 2012

- Arduino Pin change Interrupts - Article by Adityapratap Singh