OpenVZ (Open Virtuozzo) is an operating-system-level virtualization technology for Linux. It allows a physical server to run multiple isolated operating system instances, called containers, virtual private servers (VPSs), or virtual environments (VEs). OpenVZ is similar to Solaris Containers and LXC.

| Developer(s) | Virtuozzo and OpenVZ community |
|---|---|
| Initial release | 2005 |
| Repository | |
| Written in | C |
| Operating system | Linux |
| Platform | x86, x86-64 |
| Available in | English |
| Type | OS-level virtualization |
| License | GPLv2 |
| Website | openvz |

OpenVZ compared to other virtualization technologies

While virtualization technologies such as VMware, Xen and KVM provide full virtualization and can run multiple operating systems and different kernel versions, OpenVZ uses a single Linux kernel and therefore can run only Linux. All OpenVZ containers share the same architecture and kernel version. This can be a disadvantage when guests require a kernel version different from that of the host. However, because OpenVZ does not have the overhead of a true hypervisor, it is fast and efficient.[1]

Memory allocation with OpenVZ is soft, in that memory not used in one virtual environment can be used by others or for disk caching. While old versions of OpenVZ used a common file system (where each virtual environment was just a directory of files isolated using chroot), current versions of OpenVZ allow each container to have its own file system.[2]

Kernel

The OpenVZ kernel is a Linux kernel, modified to add support for OpenVZ containers. The modified kernel provides virtualization, isolation, resource management, and checkpointing. As of vzctl 4.0, OpenVZ can work with unpatched Linux 3.x kernels, with a reduced feature set.[3]

Virtualization and isolation

Each container is a separate entity, and behaves largely as a physical server would. Each has its own:

- Files

- System libraries, applications, virtualized

/procand/sys, virtualized locks, etc. - Users and groups

- Each container has its own root user, as well as other users and groups.

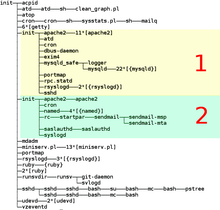

- Process tree

- A container only sees its own processes (starting from

init). PIDs are virtualized, so that the init PID is 1 as it should be. - Network

- Virtual network device, which allows a container to have its own IP addresses, as well as a set of netfilter (

iptables), and routing rules. - Devices

- If needed, any container can be granted access to real devices like network interfaces, serial ports, disk partitions, etc.

- IPC objects

- Shared memory, semaphores, messages.

Resource management

OpenVZ resource management consists of four components: two-level disk quota, fair CPU scheduler, disk I/O scheduler, and User Beancounters (see below). The settings for these resources can be changed while a container is running, eliminating the need to reboot.

- Two-level disk quota
  - Each container can have its own disk quotas, measured in terms of disk blocks and inodes (roughly, the number of files). Within the container, it is possible to use standard tools to set UNIX per-user and per-group disk quotas.
- CPU scheduler
  - The CPU scheduler in OpenVZ is a two-level implementation of the fair-share scheduling strategy. On the first level, the scheduler decides which container gets the CPU time slice, based on per-container cpuunits values. On the second level, the standard Linux scheduler decides which process to run in that container, using standard Linux process priorities. Different cpuunits values can be set for each container, and real CPU time is distributed proportionally to these values. In addition, OpenVZ provides ways to set strict CPU limits, such as 10% of total CPU time (`--cpulimit`), to limit the number of CPU cores available to a container (`--cpus`), and to bind a container to a specific set of CPUs (`--cpumask`).[4]
- I/O scheduler
  - Similar to the CPU scheduler described above, the I/O scheduler in OpenVZ is also two-level, utilizing Jens Axboe's CFQ I/O scheduler on its second level. Each container is assigned an I/O priority, and the scheduler distributes the available I/O bandwidth according to the priorities assigned. Thus no single container can saturate an I/O channel.
- User Beancounters
  - User Beancounters is a set of per-container counters, limits, and guarantees, meant to prevent a single container from monopolizing system resources. In current OpenVZ kernels (RHEL6-based 042stab*) there are two primary parameters, and others are optional.[5] Other resources are mostly memory and various in-kernel objects such as inter-process communication shared memory segments and network buffers. Each resource can be seen in `/proc/user_beancounters` and has five values associated with it: current usage, maximum usage (for the lifetime of a container), barrier, limit, and fail counter. The meaning of barrier and limit is parameter-dependent; in short, they can be thought of as a soft limit and a hard limit. If any resource hits the limit, its fail counter is increased. This allows the owner to detect problems by monitoring `/proc/user_beancounters` in the container.
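The monitoring described for User Beancounters can be sketched in a few lines of Python. This is a minimal illustration, not an official tool: it assumes the usual text layout of `/proc/user_beancounters` (a version line, a header line, then one row per resource with the five values in the order given above, the first row of each container prefixed with `<ID>:`). The `Beancounter` class, the function names, and the sample data are hypothetical and made up for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Beancounter:
    ctid: int      # container ID the row belongs to
    resource: str  # resource name
    held: int      # current usage
    maxheld: int   # maximum usage over the container's lifetime
    barrier: int   # roughly a soft limit
    limit: int     # roughly a hard limit
    failcnt: int   # number of times the limit was hit


def parse_beancounters(text: str) -> List[Beancounter]:
    """Parse text in the assumed /proc/user_beancounters layout."""
    rows: List[Beancounter] = []
    ctid = None
    for line in text.splitlines():
        fields = line.split()
        # Skip blank lines, the "Version:" line, and the column header.
        if not fields or fields[0] in ("Version:", "uid"):
            continue
        # The first row of each container starts with "<ctid>:".
        if fields[0].endswith(":"):
            ctid = int(fields[0][:-1])
            fields = fields[1:]
        if ctid is None or len(fields) != 6:
            continue
        name, *values = fields
        held, maxheld, barrier, limit, failcnt = map(int, values)
        rows.append(Beancounter(ctid, name, held, maxheld, barrier, limit, failcnt))
    return rows


def failed_resources(rows: List[Beancounter]) -> List[Beancounter]:
    """Return the resources whose fail counter is non-zero."""
    return [r for r in rows if r.failcnt > 0]


# Invented sample data for illustration only.
SAMPLE = """\
Version: 2.5
       uid  resource           held    maxheld    barrier      limit    failcnt
      101:  kmemsize        2714016    3215360   14372700   14790164          0
            numproc              24         31        240        240          3
            physpages          9012      12001          0     262144          0
"""

for r in failed_resources(parse_beancounters(SAMPLE)):
    print(f"CT {r.ctid}: {r.resource} hit its limit {r.failcnt} time(s)")
```

On a real host, the same functions could be fed the contents of `/proc/user_beancounters` instead of `SAMPLE`; reading that file typically requires root privileges.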

Checkpointing and live migration

A live migration and checkpointing feature was released for OpenVZ in the middle of April 2006. This makes it possible to move a container from one physical server to another without shutting down the container. The process is known as checkpointing: a container is frozen and its whole state is saved to a file on disk. This file can then be transferred to another machine and a container can be unfrozen (restored) there; the delay is roughly a few seconds. Because state is usually preserved completely, this pause may appear to be an ordinary computational delay.
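The freeze, transfer, and restore sequence can be sketched as three commands. The Python snippet below only builds and prints the command lines; it does not run them. It assumes the `chkpnt` and `restore` subcommands of `vzctl` with their `--dumpfile` option, and it assumes that the container's files and configuration are already available on the destination server (for example, on shared storage). The container ID, host name, dump file path, and function name are hypothetical.

```python
from typing import List


def checkpoint_migration_commands(ctid: int, dest_host: str, dumpfile: str) -> List[List[str]]:
    """Return the commands for a manual checkpoint/transfer/restore sequence."""
    return [
        # 1. Freeze the container and save its whole state to a file.
        ["vzctl", "chkpnt", str(ctid), "--dumpfile", dumpfile],
        # 2. Transfer the state file to the destination server.
        ["scp", dumpfile, f"{dest_host}:{dumpfile}"],
        # 3. On the destination, restore (unfreeze) the container from the file.
        ["ssh", dest_host, "vzctl", "restore", str(ctid), "--dumpfile", dumpfile],
    ]


# Hypothetical container ID, host, and path, for illustration only.
for cmd in checkpoint_migration_commands(101, "node2.example.com", "/vz/dump/Dump.101"):
    print(" ".join(cmd))
```

Returning the commands as argument lists rather than shell strings keeps the sketch safe to inspect and easy to pass to `subprocess.run` later, should one choose to execute them.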

Limitations

By default, OpenVZ restricts container access to real physical devices (thus making a container hardware-independent). An OpenVZ administrator can enable container access to various real devices, such as disk drives, USB ports,[6] PCI devices[7] or physical network cards.[8]

/dev/loopN is often restricted in deployments (as loop devices use kernel threads which might be a security issue), which restricts the ability to mount disk images. A work-around is to use FUSE.

OpenVZ supports only some VPN technologies: those based on PPP (such as PPTP/L2TP) and on TUN/TAP. IPsec has been supported inside containers since kernel 2.6.32.

A graphical user interface called EasyVZ was attempted in 2007,[9] but it did not progress beyond version 0.1. Up to version 3.4, Proxmox VE could be used as an OpenVZ-based server virtualization environment with a GUI, although later versions switched to LXC.


References

- [1] "Performance Evaluation of Virtualization Technologies for Server Consolidation". Archived from the original on 2009-01-15.

- [2] "Ploop - OpenVZ Linux Containers Wiki". Archived from the original on 2012-03-26.

- [3] Kolyshkin, Kir (6 October 2012). "OpenVZ turns 7, gifts are available!". OpenVZ Blog. Retrieved 2013-01-17.

- [4] vzctl(8) man page, CPU fair scheduler parameters section, http://openvz.org/Man/vzctl.8#CPU_fair_scheduler_parameters. Archived 2017-04-14 at the Wayback Machine.

- [5] "VSwap - OpenVZ Linux Containers Wiki". Archived from the original on 2013-02-13.

- [6] vzctl(8) man page, Device access management subsection, http://wiki.openvz.org/Man/vzctl.8#Device_access_management

- [7] vzctl(8) man page, PCI device management section, http://wiki.openvz.org/Man/vzctl.8#PCI_device_management

- [8] vzctl(8) man page, Network devices section, http://wiki.openvz.org/Man/vzctl.8#Network_devices_control_parameters

- [9] "EasyVZ: Grafische Verwaltung für OpenVZ. Frontend für freie Linux-Virtualisierung" [EasyVZ: graphical management for OpenVZ. Front end for free Linux virtualization] (in German).